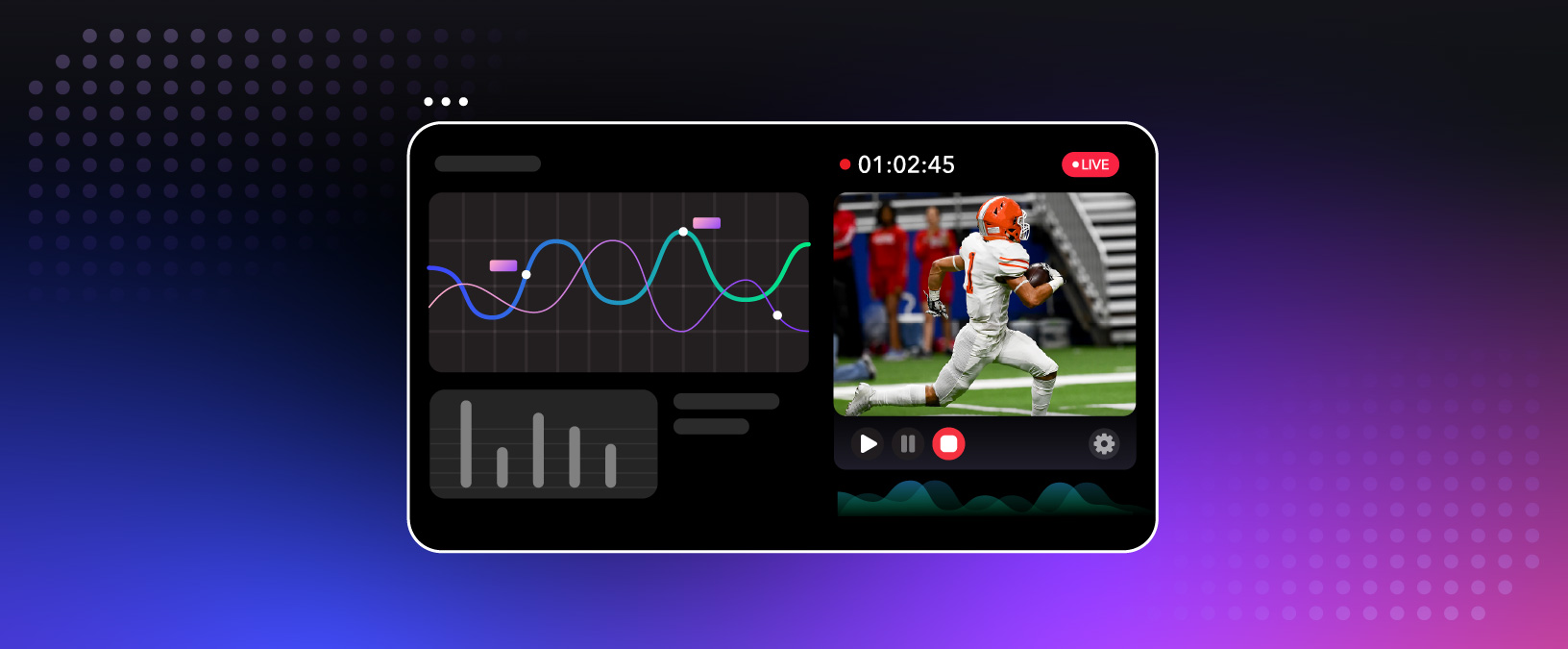

Streaming APIs

Explore the latest product announcements, tutorials, and guides for building immersive, interactive experiences with the Dolby.io Streaming APIs.

Streaming documentation

Drive real-time interactions and engagement with sub-second latency

We are more than just a streaming solutions provider; we are a technology partner helping you build a streaming ecosystem that meets your goals. Get started for free; as you grow, we offer aggressive volume discounts that protect your margins.

Developer Resources

Explore learning paths and helpful resources as you begin development with Dolby.io.